HomeRobot (noun): An affordable compliant robot that navigates homes and manipulates a wide range of objects in order to complete everyday tasks.

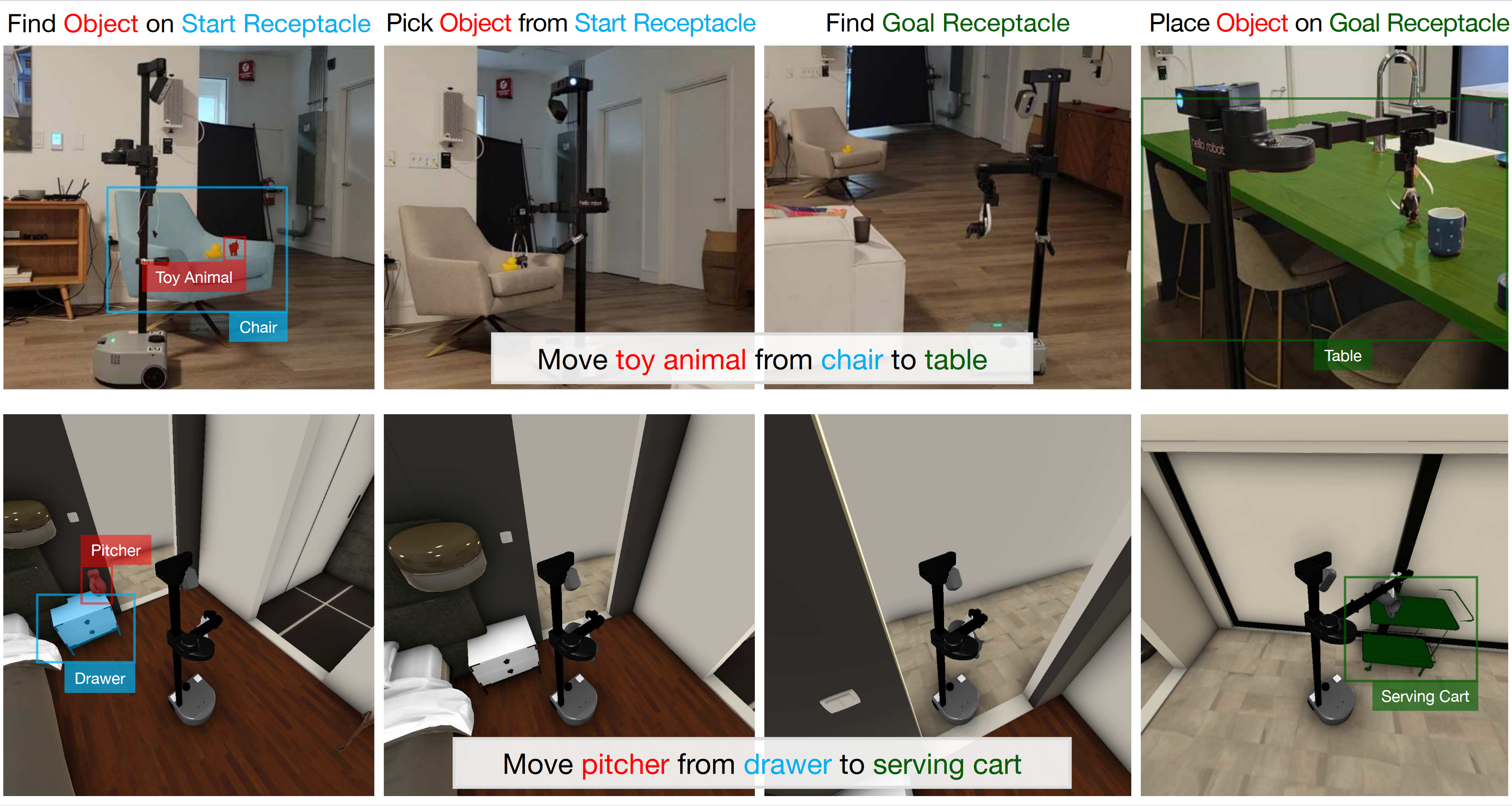

Open-Vocabulary Mobile Manipulation (OVMM) is the problem of picking up any object in any unseen environment and placing it at a commanded location. This is a foundational challenge for robots to be useful assistants in human environments, because it involves tackling sub-problems from across robotics: perception, language understanding, navigation, and manipulation are all essential to OVMM. In addition, integrating the solutions to these sub-problems poses its own substantial challenges. To drive research in this area, we introduce the HomeRobot OVMM benchmark, where an agent navigates household environments to grasp novel objects and place them on target receptacles. HomeRobot has two components: a simulation component, which uses a large and diverse curated object set in new, high-quality multi-room home environments; and a real-world component, which provides a software stack for the low-cost Hello Robot Stretch to encourage replication of real-world experiments across labs. We implement both reinforcement learning and heuristic (model-based) baselines and show evidence of sim-to-real transfer. Our baselines achieve a 20% success rate in the real world; our experiments identify ways in which future research can improve performance.

We consider tasks of the form "move the <object> from the <start_receptacle> to the <goal_receptacle>," and instantiate them both in simulation and in the real world.
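The task template above can be sketched in a few lines of Python. This is only an illustration of the instruction format, not code from the HomeRobot library: the class name `OVMMTask`, its field names, and the `parse_instruction` helper are all hypothetical.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Instruction template taken from the task description above.
TEMPLATE = "Move the {object} from the {start_receptacle} to the {goal_receptacle}."

# Hypothetical pattern that inverts the template; the trailing period is optional.
PATTERN = re.compile(
    r"^Move the (?P<object>.+?) from the (?P<start>.+?) to the (?P<goal>.+?)\.?$",
    re.IGNORECASE,
)


@dataclass(frozen=True)
class OVMMTask:
    """Hypothetical container for one OVMM episode goal (not the library's type)."""

    object_name: str
    start_receptacle: str
    goal_receptacle: str

    def instruction(self) -> str:
        # Fill the template with this task's three slots.
        return TEMPLATE.format(
            object=self.object_name,
            start_receptacle=self.start_receptacle,
            goal_receptacle=self.goal_receptacle,
        )


def parse_instruction(text: str) -> Optional[OVMMTask]:
    """Recover the three slots from an instruction, or None if it does not match."""
    match = PATTERN.match(text.strip())
    if match is None:
        return None
    return OVMMTask(match["object"], match["start"], match["goal"])


task = OVMMTask("box", "stand", "chair")
print(task.instruction())
print(parse_instruction("Move the toy construction set from the table to the stool."))
```

Because the object slot is open-vocabulary, the sketch keeps all three slots as free-form strings rather than restricting them to a fixed label set.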

In simulation, we initially provide 50 scenes, with thousands of episodes and unique object instances. Objects are divided between seen and unseen categories: for example, at train time, we might see a cup, but we will not have seen the exact cup from our real-world environments, and we will not have seen a toy elephant.
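The seen/unseen distinction described above can be made concrete with a small sketch. It assumes a hypothetical mapping from category names to the instance IDs available at train time; the category names, instance IDs, and the `split_label` function are illustrative and do not reflect the benchmark's actual data format.

```python
from typing import Dict, Set


def split_label(category: str, instance_id: str,
                train_instances: Dict[str, Set[str]]) -> str:
    """Label an evaluation object relative to a (hypothetical) training set."""
    if category not in train_instances:
        # e.g. a toy elephant when no toy elephants appeared in training
        return "unseen category"
    if instance_id in train_instances[category]:
        return "seen instance"
    # e.g. a new cup when other cups appeared in training
    return "seen category, unseen instance"


# Hypothetical training set: two cup instances, one box instance.
train = {"cup": {"cup_01", "cup_02"}, "box": {"box_01"}}

print(split_label("cup", "cup_99", train))
print(split_label("toy elephant", "elephant_01", train))
```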

In the real world, we provide the HomeRobot library, which implements baseline methods for OVMM and provides a robotics stack for both real and simulated robots. This stack will allow researchers anywhere to get started on this exciting problem.

Move the box from the stand to the chair.

Move the multiport hub from the stool to the table.

Move the toy construction set from the table to the stool.

Here, the robot must find a stuffed animal on a chair and move it to the sofa. Neither the stuffed animal, the chair, nor the sofa has been seen before.

Likewise, in this case the robot has to find a small toy elephant - which could be anywhere in the apartment, but is known to be on a chair - and place it on the dining table.

Hello Robot: Builders of the Stretch robot which we use for HomeRobot. Check out their website for more information about the hardware we used, and for multiple guides on how to use your robot and take care of it.

NeurIPS 2023 HomeRobot: Open Vocabulary Mobile Manipulation (OVMM) Challenge: Compete on our new benchmark for a chance to win a Hello Robot Stretch!

@article{homerobot,
  title = {HomeRobot: Open Vocabulary Mobile Manipulation},
  author = {Yenamandra, Sriram and Ramachandran, Arun and Yadav, Karmesh and Wang, Austin and Khanna, Mukul and Gervet, Theophile and Yang, Tsung-Yen and Jain, Vidhi and Clegg, Alexander William and Turner, John and Kira, Zsolt and Savva, Manolis and Chang, Angel and Chaplot, Devendra Singh and Batra, Dhruv and Mottaghi, Roozbeh and Bisk, Yonatan and Paxton, Chris},
  year = {2023},
  url = {https://github.com/facebookresearch/home-robot},
}